This is a trap we all fall into at times.

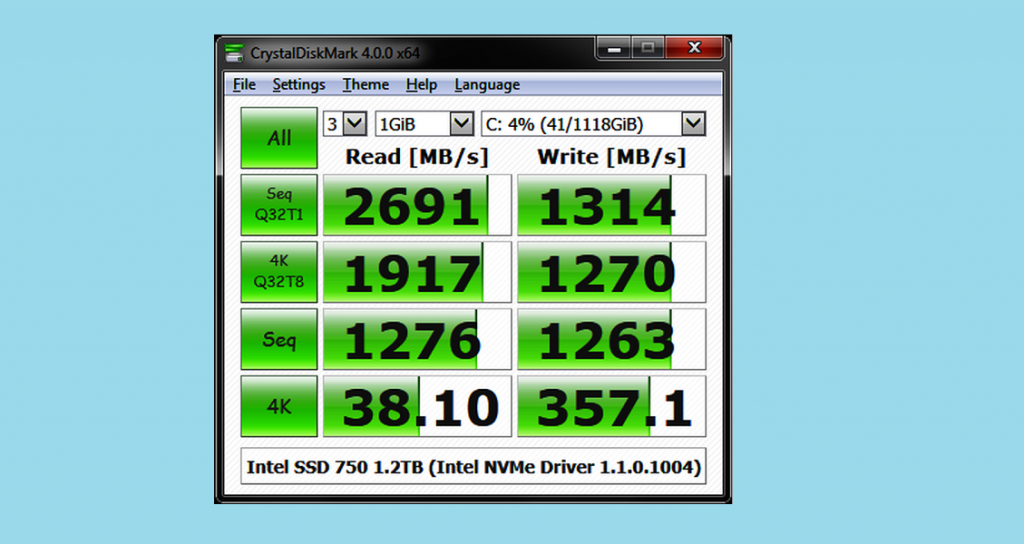

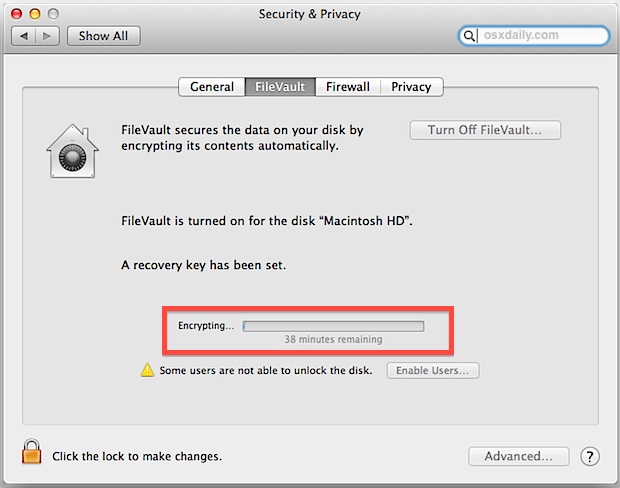

What you can’t do is use individual data taken from the population to make the sort of comparisons you’d make in controlled experiments, because too many of those variables are uncontrolled. onset of write cache exhaustion or thermal throttlingĪs in many other fields, you can design small experiments which control as many of those variables as possible in order to make comparisons, or you can pool data with high dispersion and analyse it statistically.caching/buffering in memory, both on the Mac and in the storage.availability of SLC Write cache on SSDs.variation in negotiated bus/port speeds.other software running which may access the disk during testing.different versions of macOS, kexts, firmware, etc.combinations of hardware, including case/enclosure, cable, host Mac.In principle, all these tests should be deterministic, so with negligible noise or error, but in practice there are a great many other factors which can come into play, including: Having decided what to benchmark, we then need to get its best estimate, either with small dispersion or known variance. And if the test doesn’t explain exactly what it does, we simply can’t trust what it’s doing. So any benchmark which runs crafted code calling low down functions in C doesn’t tell me as much as that using standard FileHandle calls from Swift or Objective-C. That’s important, because some benchmarks use quite different code from that normally used by apps, and features in storage can also be tuned to deliver better benchmark results even though in real use they’re slower. What I want to know, though, is how fast storage will be when in use, typically doing mundane tasks like reading and saving files, and when copying in the Finder. For those in the trade, it’s to show how fast their product is compared with their competitors.

For some, it’s to prove that their purchase choice performs better than those of others. We run benchmark tests for different reasons. This article explains some of the difficulties in interpreting this avalanche of data, and how we can move forward. It's also available for Linux and Mac operating systems, as well as included in a couple of LiveCD/LiveUSB programs.Over the last couple of weeks, I’ve read more benchmarks and other performance measurements on SSDs than I’ve ever seen before, thanks to so many of you who have contributed results from your own tests. The latest version works with Windows 11, 10, 8, 7, and Vista, but there's an outdated edition you can get for older Windows versions.

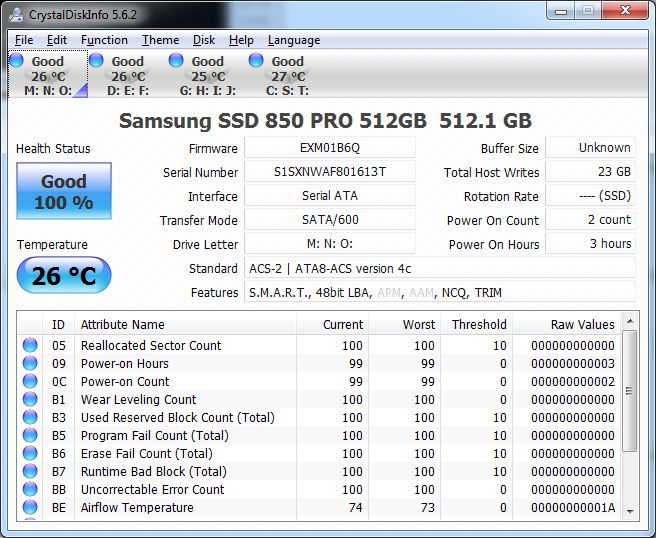

This program can be downloaded for Windows as a portable program or as a regular program with a normal installer. GSmartControl runs three self-tests to find drive faults: Short Self-test takes around 2 minutes to complete and is used to detect a completely damaged hard drive, Extended Self-test takes 70 minutes to finish and examines the entire surface of a hard drive to find faults, and Conveyance Self-test is a 5-minute test that's supposed to find damages that occurred during the transporting of a drive. View and save SMART attribute values like the power cycle count, multi-zone error rate, calibration retry count, and many others. GSmartControl can run various hard drive tests with detailed results and give an overall health assessment of a drive. When exporting information, it includes everything, not just a specific result you want to save Doesn't support every USB and RAID device
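The difference between a small controlled experiment and pooled results with high dispersion can be illustrated with a short sketch. This is only one way of summarising benchmark data, not a method taken from the article: the function names `summarise` and `welch_t` are hypothetical, the transfer speeds are invented for illustration, and Python is used here simply for brevity.

```python
import math
import statistics


def summarise(speeds_mb_s):
    """Summarise repeated transfer-speed measurements (MB/s).

    Returns the sample size, mean, sample standard deviation,
    standard error of the mean and coefficient of variation.
    """
    n = len(speeds_mb_s)
    if n < 2:
        raise ValueError("need at least two measurements to estimate variance")
    mean = statistics.mean(speeds_mb_s)
    sd = statistics.stdev(speeds_mb_s)  # sample SD, n - 1 denominator
    return {
        "n": n,
        "mean": mean,
        "sd": sd,
        "sem": sd / math.sqrt(n),  # standard error of the mean
        "cv": sd / mean,           # dispersion relative to the mean
    }


def welch_t(a, b):
    """Welch's t statistic for two groups with unequal variances."""
    sa, sb = summarise(a), summarise(b)
    return (sa["mean"] - sb["mean"]) / math.sqrt(sa["sem"] ** 2 + sb["sem"] ** 2)


# Invented numbers, purely illustrative (assumption, not measured data).
# "controlled": one Mac, one enclosure, one cable, repeated runs.
controlled = [2810.0, 2795.0, 2805.0, 2800.0, 2790.0]
# "pooled": results contributed from many different setups.
pooled = [2900.0, 1450.0, 2600.0, 900.0, 2750.0, 2100.0]

for label, data in (("controlled", controlled), ("pooled", pooled)):
    s = summarise(data)
    print(f"{label}: n={s['n']} mean={s['mean']:.0f} MB/s "
          f"sd={s['sd']:.0f} MB/s cv={s['cv']:.1%}")
```

With data like these, the controlled runs have a small coefficient of variation, so a single mean is a reasonable estimate. The pooled results only make sense as a distribution, and any single value drawn from them says little about how two drives compare.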
